The $15k/month AI automation agency is the latest iteration of the digital gold rush. On paper, it sounds frictionless: identify a business process, write a set of sophisticated prompts for a Large Language Model (LLM), and charge a premium for the resulting efficiency. In practice, however, the industry is in the middle of a brutal transition from "prompting as a parlor trick" to "prompting as an operational system." Most agencies fail not because they lack technical skill, but because they sell "solutions" to problems their clients haven't yet confirmed are worth solving at scale.

To reach a $15k monthly recurring revenue (MRR) threshold, you must move beyond the "prompt engineer" label—which is increasingly viewed as ephemeral—and reposition yourself as an "AI Orchestrator."

The Anatomy of the $15k/mo Model

A sustainable agency in this space doesn’t sell prompts; it sells deterministic outcomes. If you are simply selling a library of copy-pasted ChatGPT snippets, you are selling a commodity with a shelf life of approximately three weeks—the time it takes for an LLM provider to update their base model and render your "jailbreak" or "specialized formatting" obsolete.

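The $15k/mo benchmark is typically reached through a "Retainer + Implementation" hybrid model. You bill $5k for the initial "Infrastructure Setup" (the system design) and $10k/mo for "Maintenance & Refinement," which includes monitoring for model drift and API reliability. Note the arithmetic: the setup fee is one-time, so a single client brings in $15k in the first month and $10k in each month after that. Holding $15k in recurring revenue takes a second retainer or a steady stream of new setups.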

The Operational Reality

Most B2B clients aren't looking for a "prompt." They are increasingly concerned with digital stability, and they are looking for:

- Consistency: "Why did it work perfectly yesterday but return garbage today?"

- Data Privacy: "Where is our proprietary data going?"

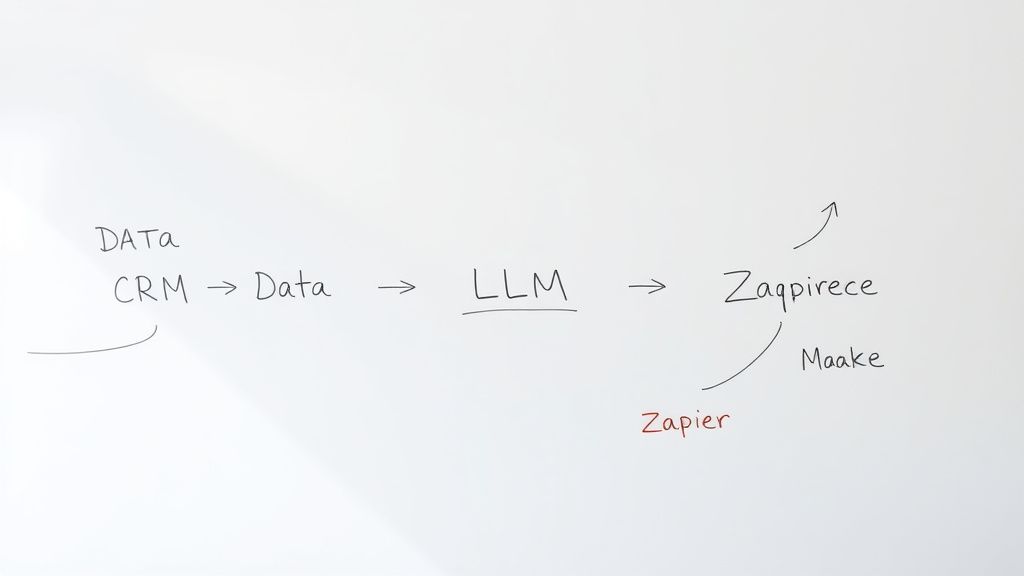

- Integration: "How do I get this result into Salesforce/HubSpot/Notion without manual copy-pasting?"

If you cannot solve the integration hurdle, you aren't an agency; you are a consultant with a hobby.

The Myth of "Prompt Engineering"

There is a growing sentiment on forums like Hacker News and specific subreddits (r/LocalLLaMA, r/MachineLearning) that "prompt engineering is dead" or "just a temporary crutch." They aren't entirely wrong. When you rely solely on natural language, you are at the mercy of the model’s "mood."

To build a high-ticket service, you must transition your logic into System Architectures. This involves:

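- Prompt Chaining (Multi-Step Orchestration): Moving beyond single prompts to multi-stage workflows where the output of each step is validated before it feeds the next. This is distinct from Chain-of-Thought (CoT) prompting, which elicits step-by-step reasoning inside a single response.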

- RAG (Retrieval-Augmented Generation) Implementation: Connecting the model to a Vector Database (like Pinecone or Weaviate) so the AI answers from context fetched out of the client’s actual PDF manuals and internal wikis rather than from memory alone. This reduces hallucination; it does not eliminate it.

- Evaluation Loops: Building a "test suite" for your prompts. If a prompt changes, how do you know it didn't break three other edge cases? You need automated testing scripts that run against a dataset of "Golden Answers."
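The evaluation loop described above can be sketched in a few lines. This is a minimal illustration, not a full test harness: `GoldenCase`, `run_golden_suite`, and `fake_model` are hypothetical names, the "model" is an offline stand-in so the example runs without an API key, and the pass criterion (required phrases appear in the answer) is a simplifying assumption. Real suites often use stricter or fuzzier scoring.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GoldenCase:
    """One regression case: an input and the phrases a good answer must contain."""
    name: str
    user_input: str
    must_contain: list[str]


def run_golden_suite(
    generate: Callable[[str], str], cases: list[GoldenCase]
) -> dict[str, bool]:
    """Run every case through `generate` and report pass/fail per case name."""
    results: dict[str, bool] = {}
    for case in cases:
        answer = generate(case.user_input).lower()
        results[case.name] = all(
            phrase.lower() in answer for phrase in case.must_contain
        )
    return results


def fake_model(user_input: str) -> str:
    """Hypothetical stand-in for a real prompt + model call."""
    if "refund" in user_input.lower():
        return "Refunds are accepted within 30 days with a receipt."
    return "I am not sure."


GOLDEN_CASES = [
    GoldenCase("refund_window", "What is your refund policy?", ["30 days", "receipt"]),
    GoldenCase("shipping_time", "How long does shipping take?", ["business days"]),
]

if __name__ == "__main__":
    for name, passed in run_golden_suite(fake_model, GOLDEN_CASES).items():
        print(f"{name}: {'PASS' if passed else 'FAIL'}")
```

Swap `fake_model` for the function that wraps your actual prompt, run the suite on every prompt change, and a failing case tells you which edge case the edit broke.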

Real Field Report: The "Scale-Up" Trap

In late 2023, a boutique firm attempted to scale by offering "Prompt Audits" for legal tech firms. They promised to reduce drafting time by 60%. The reality? The prompt worked perfectly in the sandbox, but once deployed across 50 users with varying degrees of input quality, the hallucinations spiked. The firm lost the client within two months. The issue wasn't the prompt; it was the lack of guardrails.

The lesson: Your agency must sell the guardrails, not just the prompt. You need to implement output validation (e.g., using Pydantic in Python to check that the model's response parses as JSON matching a schema, and rejecting or retrying it when it does not) so the downstream system never receives malformed data.
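Here is a rough sketch of that validation guardrail. To keep it runnable with no installs, it uses only the standard library in place of Pydantic; a Pydantic model would express the same checks more compactly. The `QuoteReply` schema, its field names, and the price floor are all hypothetical, chosen purely for illustration.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class QuoteReply:
    """Hypothetical schema the downstream system expects."""
    customer_id: str
    price_usd: float


class InvalidModelOutput(ValueError):
    """Raised when the model's text cannot be turned into a QuoteReply."""


def parse_quote_reply(raw: str, min_price: float = 1.0) -> QuoteReply:
    """Validate raw model text; raise instead of passing bad data downstream."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidModelOutput(f"not JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise InvalidModelOutput("expected a JSON object")

    customer_id = data.get("customer_id")
    price = data.get("price_usd")
    if not isinstance(customer_id, str) or not customer_id:
        raise InvalidModelOutput("customer_id must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(price, bool) or not isinstance(price, (int, float)):
        raise InvalidModelOutput("price_usd must be a number")
    if price < min_price:
        raise InvalidModelOutput(f"price_usd {price} is below the floor {min_price}")
    return QuoteReply(customer_id=customer_id, price_usd=float(price))
```

The caller decides what a rejection means: retry the model, fall back to a template, or route the request to a human. The point is that the decision is made in code, not left to the prompt.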

The Economics of Trust and Retention

Why do clients pay $15k/month? They aren't paying for your creative writing skills. They are paying for risk mitigation.

When a B2B company integrates AI, they are terrified of:

- Brand Damage: An AI chatbot telling a customer to "go to hell" or quoting $1 for a $100 product.

- Operational Stagnation: The AI breaks, and their support queue backs up for 48 hours.

- Black-box Ambiguity: The legal team wants to know why the AI made a decision.

Your value proposition is simple: "We build the safety net so your team can use AI without the PR nightmare."

Counter-Criticism: The "Prompt Engineering vs. Software Engineering" Debate

There is a vitriolic debate in the developer community regarding whether "Prompt Engineers" are actually just "Engineers with worse tooling."

The Critics' View:

"Prompt engineering is a euphemism for people who don't want to learn how to write code. Real, scalable AI applications are built via fine-tuning, RAG, and robust software engineering practices, not by tweaking adjectives in a system prompt." — Common sentiment on major tech forums.

The Pro-Service View:

"Engineers are great at building the plumbing, but terrible at interface design and nuance. Companies need 'Translation Agents'—people who can sit with a VP of Sales and translate their abstract needs into a logic tree that an LLM can parse."

The truth lies in the middle. The successful agency founder is a hybrid. You must know enough Python to debug a failed API response, but enough business acumen to explain to a CMO why "being funny" is a bad instruction for an automated email responder.

Scaling the Agency: The "Workaround" Culture

One of the biggest friction points you will encounter is the Migration Chaos. Your clients will have existing workflows (e.g., massive, brittle Excel macros or legacy database systems) that they want to keep.

Do not try to replace everything at once. This is the surest way to project failure. Instead, implement a Sidecar Pattern:

- Keep their legacy system as the "Source of Truth."

- Inject the AI workflow as a "Sidecar" that processes information in parallel.

- Gradually move the logic to the AI as trust is built.
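One way to picture the Sidecar Pattern in code is shown below, as a sketch under stated assumptions. The legacy system's answer is always what the user gets; the AI path runs in "shadow mode" and its output is only logged for comparison. All names (`run_with_sidecar`, `legacy_category`, `ai_category`) are hypothetical, the AI function is a stand-in rather than a real model call, and the two paths run one after the other here for simplicity rather than truly in parallel.

```python
from typing import Any, Callable


def run_with_sidecar(
    record: dict,
    legacy: Callable[[dict], Any],
    sidecar: Callable[[dict], Any],
    shadow_log: list[dict],
) -> Any:
    """Return the legacy result; run the sidecar alongside and log the comparison."""
    legacy_result = legacy(record)
    entry: dict = {"record": record, "legacy": legacy_result}
    try:
        entry["sidecar"] = sidecar(record)
        entry["agree"] = entry["sidecar"] == legacy_result
    except Exception as exc:  # a sidecar failure must never reach the user
        entry["error"] = repr(exc)
        entry["agree"] = False
    shadow_log.append(entry)
    return legacy_result  # the legacy system stays the source of truth


def agreement_rate(shadow_log: list[dict]) -> float:
    """Fraction of logged records where the sidecar matched the legacy system."""
    if not shadow_log:
        return 0.0
    return sum(1 for e in shadow_log if e["agree"]) / len(shadow_log)


def legacy_category(invoice: dict) -> str:
    """Hypothetical legacy rule, e.g. what an Excel macro does today."""
    return "large" if invoice["amount"] >= 1000 else "small"


def ai_category(invoice: dict) -> str:
    """Hypothetical AI stand-in that disagrees with the legacy rule at the boundary."""
    return "large" if invoice["amount"] > 1000 else "small"
```

The agreement rate over a few weeks of real traffic is the evidence you bring to the client when proposing to move a piece of logic over to the AI path.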

Users will inevitably complain: "The interface is too complicated" or "I liked the old way better." Anticipate this. The biggest enemy of AI adoption isn't technical; it's change management. Your agency must provide training documentation and, more importantly, "Human-in-the-Loop" (HITL) checkpoints.

Never ship a "fully autonomous" system as your first deliverable. Ship a system that requires a human click to approve the output. Once the team builds confidence (and a track record of 99% accuracy), then flip the switch to automation.
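A human-approval checkpoint like this can be surprisingly small. The sketch below is illustrative only: `ApprovalGate` is a hypothetical class, the reviewer's approval rate is used as a rough proxy for accuracy, and the minimum of 100 reviews before automation is an assumed default rather than a rule from any standard. The 99% threshold mirrors the figure above.

```python
from dataclasses import dataclass, field


@dataclass
class ApprovalGate:
    """Holds AI drafts until a human approves them, unless autonomy is enabled."""
    autonomous: bool = False
    pending: list[str] = field(default_factory=list)
    sent: list[str] = field(default_factory=list)
    approved: int = 0
    rejected: int = 0

    def submit(self, draft: str) -> None:
        """Queue a draft for review, or send it directly once autonomy is on."""
        if self.autonomous:
            self.sent.append(draft)
        else:
            self.pending.append(draft)

    def review(self, approve: bool) -> None:
        """Record a human decision on the oldest pending draft."""
        draft = self.pending.pop(0)
        if approve:
            self.approved += 1
            self.sent.append(draft)
        else:
            self.rejected += 1

    def approval_rate(self) -> float:
        reviewed = self.approved + self.rejected
        return self.approved / reviewed if reviewed else 0.0

    def maybe_enable_autonomy(
        self, threshold: float = 0.99, min_reviews: int = 100
    ) -> bool:
        """Flip to autonomous mode only after enough reviews at a high approval rate."""
        reviewed = self.approved + self.rejected
        if reviewed >= min_reviews and self.approval_rate() >= threshold:
            self.autonomous = True
        return self.autonomous
```

Because every decision is counted, "flip the switch" becomes a measurable event with a paper trail instead of a judgment call made in a meeting.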

The Hidden Costs and Fragility

If you are running this agency, prepare for "API Hell." Models change (GPT-4 gives way to GPT-4o), rate limits shift, and endpoints are deprecated suddenly. Your agency needs to invest in Model Agnosticism.

If you build your client’s entire infrastructure on a single vendor's specific prompt format, you are effectively a hostage to their pricing and downtime. Build your service layer to be modular. Use frameworks like LangChain or LlamaIndex to decouple the prompt logic from the model provider. If OpenAI has an outage, your agency should be able to pivot the client’s workload to Anthropic or a local model in under two hours. That is what a $15k/mo retainer buys: continuity.
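The core of that modularity is a thin layer that treats every provider as an interchangeable function. The sketch below is plain Python, not LangChain or any vendor SDK: each provider is represented by a hypothetical adapter taking a prompt string and returning text, and `primary_down` and `secondary_ok` are offline stand-ins for real API calls.

```python
from typing import Callable, Sequence

# A provider is a (name, adapter) pair; the adapter hides vendor-specific details.
Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider errored for a prompt."""


def complete_with_fallback(
    prompt: str, providers: Sequence[Provider]
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, completion) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # outage, rate limit, deprecation, timeout, ...
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def primary_down(prompt: str) -> str:
    """Hypothetical adapter simulating an outage at the main vendor."""
    raise ConnectionError("503 from primary")


def secondary_ok(prompt: str) -> str:
    """Hypothetical adapter for a backup vendor or local model."""
    return f"[secondary] {prompt}"


if __name__ == "__main__":
    chain = [("primary", primary_down), ("secondary", secondary_ok)]
    print(complete_with_fallback("Summarize this ticket.", chain))
```

In a real deployment each adapter would also translate the shared prompt into the vendor's message format, which is exactly the piece frameworks like LangChain or LlamaIndex aim to handle for you.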

Final Synthesis: The Operational Reality

The most successful practitioners in this space are currently those who treat their prompts like code. They use Git to version-control their prompts, they write unit tests for their outputs, and they treat their client relationship like a long-term DevOps partnership.

Stop looking for the "magic prompt." There is no magic sequence of words that guarantees success. There is only the rigorous process of testing, failing, iterating, and wrapping the AI in enough code to make it behave predictably. If you sell that process, the $15k/month is not just achievable—it’s the entry-level price for the value you provide.