The promise of Decentralized Physical Infrastructure Networks (DePIN) for AI is deceptively simple: turn your idle GPU hardware into a revenue-generating node within a global, distributed supercomputer. For the individual, it sounds like the ultimate "set it and forget it" passive income stream. For the industry, it is marketed as a democratic revolution against a compute market dominated by Nvidia hardware and a handful of large cloud providers.

However, once you strip away the polished marketing decks and the venture capital buzz, the reality of leasing consumer GPUs for decentralized inference or training is a messy, hardware-intensive, and often precarious endeavor. It is a world where electricity costs, network latency, and the volatility of crypto-token rewards collide with the brutal technical requirements of modern AI models.

The Anatomy of the DePIN GPU Stack

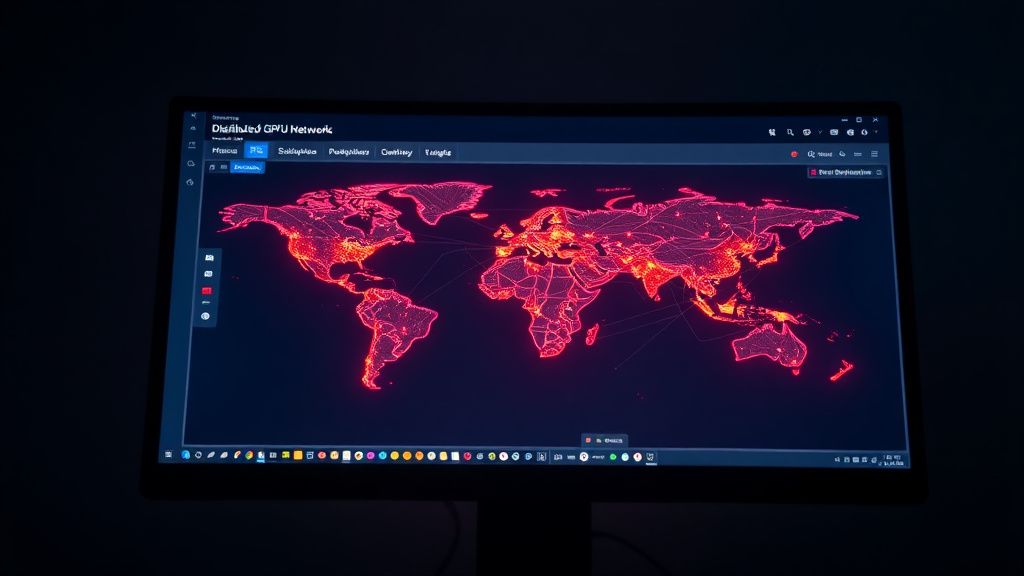

To understand why this has become a gold rush—and a minefield—you have to look at the underlying economic tension. Companies like Render, Akash, and Io.net have essentially created a marketplace for "compute cycles." In the traditional world, if you want a cluster of H100s, you pay AWS or GCP a premium. In the DePIN model, you are competing with other individuals to provide that same utility for a fraction of the cost.

The infrastructure typically relies on a three-tier architecture:

- The Provider (You): You provision a machine with one or more GPUs, install the provider software (often a Docker container or a Linux-based daemon), and "stake" your tokens to prove your commitment to uptime.

- The Orchestrator: This is the middleware layer (e.g., Akash’s decentralized cloud protocol). It handles the discovery process: matching a developer who needs 4x RTX 4090s with your specific machine.

- The Consumer: An AI engineer or researcher needing to run an LLM (Large Language Model) inference job or a fine-tuning task.
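The discovery step the orchestrator performs can be pictured as a filter-and-rank over provider offers. The following is a minimal sketch under stated assumptions, not how Akash, Io.net, or any other protocol actually matches jobs: the `Offer` and `JobRequest` types, their fields, and the cheapest-first ranking rule are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Offer:
    """A provider's advertised capacity (hypothetical schema)."""
    provider_id: str
    gpu_model: str
    gpu_count: int
    price_per_gpu_hour: float  # unit is arbitrary here (token or USD)
    reputation: float          # 0.0 to 1.0, higher is better


@dataclass(frozen=True)
class JobRequest:
    """What a consumer asks for (hypothetical schema)."""
    gpu_model: str
    gpu_count: int
    max_price_per_gpu_hour: float
    min_reputation: float = 0.0


def match(job: JobRequest, offers: List[Offer]) -> Optional[Offer]:
    """Return the cheapest eligible offer, or None if nothing qualifies."""
    eligible = [
        o for o in offers
        if o.gpu_model == job.gpu_model
        and o.gpu_count >= job.gpu_count
        and o.price_per_gpu_hour <= job.max_price_per_gpu_hour
        and o.reputation >= job.min_reputation
    ]
    if not eligible:
        return None
    # Cheapest first; ties are broken in favor of higher reputation.
    return min(eligible, key=lambda o: (o.price_per_gpu_hour, -o.reputation))


offers = [
    Offer("garage-rig", "RTX 4090", 4, 0.40, 0.9),
    Offer("small-rig", "RTX 4090", 2, 0.20, 0.9),   # too few GPUs for the job below
    Offer("flaky-rig", "RTX 4090", 8, 0.35, 0.5),   # reputation too low for the job below
]
job = JobRequest("RTX 4090", gpu_count=4, max_price_per_gpu_hour=0.50, min_reputation=0.6)
best = match(job, offers)
```

Even in this toy version, a provider can lose a job on price, on capacity, or on reputation, which is why uptime history matters as much as raw hardware.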

Operational Reality: It Is Not "Passive"

The biggest friction point in the DePIN ecosystem is the gap between the marketing claim of "passive income" and the reality of "server administration."

When you join a network, you aren't just selling your compute; you are committing to uptime and reliability levels, effectively a Service Level Agreement (SLA), that are difficult for a consumer machine to meet. If your home internet jitters, or your power supply unit (PSU) trips because a job spiked the GPU load unexpectedly, you aren't just losing income for that hour; you are often incurring "slashing" penalties, in which part of your staked tokens is forfeited.

The "Workaround" Culture

Community forums like the r/GPUMining subreddit or specific Discord channels for protocols like Io.net are filled with "hacky" fixes. Users share scripts and workarounds for a handful of recurring problems:

- Thermal Management: Consumer cards aren't meant to run at 95% utilization 24/7. Users are resorting to custom fan curves, undervolting via specialized scripts, and even industrial-grade case fans mounted outside the chassis.

- NAT Traversal: Getting a home machine to be "discoverable" for global inference requests is a trade-off. Port forwarding works but exposes the machine to the public internet; avoiding that exposure usually means VPN or tunneling setups, which introduce latency.

- Driver Hell: The interplay between Nvidia’s proprietary drivers and the Docker environments used by these platforms is a frequent failure point. A routine driver update can take a node offline for hours.
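One way to catch a driver problem before it takes a node offline is a pre-flight check that compares the installed driver version against the minimum a given CUDA release needs. The sketch below is illustrative only: the `MIN_DRIVER_FOR_CUDA` table holds the Linux minimums as I understand them from Nvidia's CUDA compatibility documentation and should be verified against the current table, and the function names are hypothetical. The driver string is the kind of value `nvidia-smi --query-gpu=driver_version --format=csv,noheader` prints.

```python
from typing import Dict, Tuple

# Assumed minimum Linux driver per CUDA major version; verify against
# Nvidia's published compatibility table before relying on these values.
MIN_DRIVER_FOR_CUDA: Dict[str, Tuple[int, ...]] = {
    "11": (450, 80, 2),
    "12": (525, 60, 13),
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a dotted version such as '535.129.03' into (535, 129, 3)."""
    return tuple(int(part) for part in text.strip().split("."))


def driver_supports(driver_version: str, cuda_major: str) -> bool:
    """True if the driver meets the assumed minimum for that CUDA major version."""
    return parse_version(driver_version) >= MIN_DRIVER_FOR_CUDA[cuda_major]


# Example: refuse to pull a CUDA 12 container image on an older driver.
ok_new = driver_supports("535.129.03", "12")
ok_old = driver_supports("470.57.02", "12")
```

Running a check like this before applying an update, or before accepting a new container image, turns a multi-hour outage into a skipped upgrade.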

Real Field Reports: The "Broken" Reality

I spoke with a contributor to a major decentralized compute network who runs a 6-node cluster in his garage. His experience highlights the systemic instability that PR materials omit.

"The software updates are constant. Last month, a deployment broke my environment because the orchestrator expected a specific CUDA version that conflicted with my base OS. My nodes were idle for three days. During that time, not only did I earn zero, but my 'reputation score' on the network tanked, which meant I wasn't being assigned high-paying jobs for the following week."

This is the hidden cost of the DePIN model: Reputation Engineering. It is a gamified system where the platform holds your potential earnings hostage until you prove your machine is as reliable as a data center.

Economic Contradictions and Tokenomics

The most common question is: "How much can I make?" The answer is almost always, "It depends on the token price and the job demand."

Most DePIN protocols pay in their own native utility tokens. This introduces Dual-Volatility Risk:

- Utilization Risk: Is there actually demand for your GPU today? If the market is flooded with supply, your hourly rate drops.

- Asset Risk: The tokens you earn are often highly volatile. A "profitable" month on paper can turn into a loss if the token price drops by 30% while you are holding your earnings.

Furthermore, there is a systemic conflict of interest. These platforms want to attract as much compute as possible, so they reward early adopters. But as supply grows, the reward per node dilutes. If you bought an RTX 4090 solely for this purpose, the ROI (Return on Investment) calculation is extremely precarious. At current consumer energy prices, many miners/providers are barely breaking even if they account for the hardware depreciation.
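The break-even arithmetic can be made concrete with a back-of-the-envelope calculation. This is a rough sketch under stated assumptions: every number in the example (token payout rate, token price, utilization, wattage, electricity price, hardware cost, depreciation period) is hypothetical, and the model ignores idle power draw, slashing penalties, and fees.

```python
def monthly_net_usd(
    tokens_per_hour: float,
    token_price_usd: float,
    utilization: float,            # fraction of hours with a paying job, 0.0 to 1.0
    gpu_watts: float,
    electricity_usd_per_kwh: float,
    hardware_cost_usd: float,
    depreciation_months: float,
    hours_per_month: float = 720.0,
) -> float:
    """Estimate monthly profit: token revenue minus power and depreciation."""
    busy_hours = utilization * hours_per_month
    revenue = tokens_per_hour * token_price_usd * busy_hours
    # Simplification: power is only counted while a job is running.
    power_cost = (gpu_watts / 1000.0) * busy_hours * electricity_usd_per_kwh
    depreciation = hardware_cost_usd / depreciation_months
    return revenue - power_cost - depreciation


# Hypothetical single-GPU node, before and after a 30% token price drop.
base = dict(
    tokens_per_hour=1.0, utilization=0.5, gpu_watts=400.0,
    electricity_usd_per_kwh=0.25, hardware_cost_usd=1800.0,
    depreciation_months=36.0,
)
net_before = monthly_net_usd(token_price_usd=0.50, **base)
net_after = monthly_net_usd(token_price_usd=0.35, **base)
print(f"net before drop: {net_before:.2f} USD, after drop: {net_after:.2f} USD")
```

Both risks show up as a single input each: utilization risk moves `utilization`, asset risk moves `token_price_usd`, and the fixed depreciation term keeps running regardless of either.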

Counter-Criticism: Why Experts Are Skeptical

Critics within the HPC (High-Performance Computing) community argue that decentralized AI inference is fundamentally flawed for large-scale enterprise needs.

- The Latency Problem: Decentralized compute cannot compete with localized data center performance for real-time inference tasks. Data movement (egress/ingress) between the consumer node and the application is often bottlenecked by home ISP upload speeds.

- Data Privacy/Security: While most protocols use Secure Enclaves (like Intel SGX) or containerized sandboxing, there is an inherent mistrust in sending proprietary model weights or sensitive user data to a stranger’s basement PC.

- Hardware Bottleneck: A decentralized cluster of GPUs lacks the high-speed interconnects (like Nvidia NVLink or InfiniBand) that enable modern training of large models. You cannot efficiently train a GPT-4 class model across a distributed network of home machines because the limited bandwidth and high latency between nodes would choke the gradient synchronization that training depends on.
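The bandwidth side of these criticisms is easy to estimate. The sketch below uses two simplified models: a plain size-over-bandwidth transfer time, and the standard bandwidth term for a ring all-reduce, in which each node sends roughly 2(N-1)/N times the gradient size per synchronization. Per-message latency, compression, and overlap with compute are ignored, and the example figures (a 7-billion-parameter model in 16-bit precision, 8 nodes, a 20 Mbit/s home uplink, a 400 Gbit/s data-center link) are assumptions chosen for illustration.

```python
def transfer_seconds(size_bytes: float, link_mbps: float) -> float:
    """Time to push size_bytes over a link of link_mbps megabits per second."""
    return size_bytes * 8.0 / (link_mbps * 1e6)


def ring_allreduce_seconds(
    param_count: float, bytes_per_param: float, n_nodes: int, link_mbps: float
) -> float:
    """Bandwidth-only estimate of one gradient sync with ring all-reduce."""
    gradient_bytes = param_count * bytes_per_param
    # Each node sends about 2 * (N - 1) / N times the gradient size.
    sent_per_node = 2.0 * (n_nodes - 1) / n_nodes * gradient_bytes
    return transfer_seconds(sent_per_node, link_mbps)


# Uploading a 1 GB result over a 20 Mbit/s home uplink.
upload_s = transfer_seconds(1e9, link_mbps=20.0)

# One gradient sync for a 7B-parameter model across 8 nodes.
home_sync_s = ring_allreduce_seconds(7e9, 2.0, n_nodes=8, link_mbps=20.0)
dc_sync_s = ring_allreduce_seconds(7e9, 2.0, n_nodes=8, link_mbps=400_000.0)
print(f"home: {home_sync_s / 3600:.1f} h per sync, data center: {dc_sync_s:.2f} s per sync")
```

Under these assumptions a single synchronization step takes hours on home connections and well under a second on a data-center fabric, and training requires many thousands of such steps.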

Scaling Challenges and "The Rug Pull" Risk

The most significant operational risk is the stability of the platform itself. Told in the style of investigative outlets such as 404 Media or The Verge, the story repeats: "compute" startups collapse when their token incentives run dry.

When a project experiences a "scaling failure", often because it promised more capacity than the market could use, the first thing to be cut is the provider rewards. Users who invested thousands of dollars in high-end GPUs are often left with hardware that has been hammered for months, now facing a secondary market flooded with "previously used for AI training" cards, which drives resale value into the ground.

The Community's "Workaround" Culture

For those who are committed, the community has become highly resourceful. On GitHub issues for protocols like Bittensor or Akash, you will find users who have effectively become system administrators. They build "watchdog" scripts that monitor their nodes, automatically rebooting them if the GPU temperature exceeds a threshold or if the container heartbeat fails.
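A watchdog of the kind described can be quite small. The following is a minimal sketch, not any community member's actual script: the temperature threshold, the heartbeat file (assumed to be touched periodically by the provider container), and the container name are all hypothetical. It reads temperatures with `nvidia-smi --query-gpu=temperature.gpu --format=csv,noheader,nounits` and restarts the container with `docker restart`; a full machine reboot could be substituted for that step.

```python
import subprocess
import time
from pathlib import Path
from typing import List


def parse_temps(smi_output: str) -> List[int]:
    """Parse one temperature per line, as printed by nvidia-smi in nounits mode."""
    return [int(line.strip()) for line in smi_output.splitlines() if line.strip()]


def read_gpu_temps() -> List[int]:
    """Query the current temperature (in Celsius) of every GPU."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=temperature.gpu", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_temps(result.stdout)


def needs_restart(
    temps: List[int],
    heartbeat_age_s: float,
    max_temp_c: int = 85,               # assumed threshold, tune per card
    max_heartbeat_age_s: float = 120.0,  # assumed staleness limit
) -> bool:
    """True if any GPU exceeds the threshold or the heartbeat has gone stale."""
    too_hot = any(t > max_temp_c for t in temps)
    stale = heartbeat_age_s > max_heartbeat_age_s
    return too_hot or stale


def run_watchdog(container: str, heartbeat_file: str, interval_s: float = 30.0) -> None:
    """Poll forever, restarting the container whenever a check fails."""
    while True:
        try:
            age = time.time() - Path(heartbeat_file).stat().st_mtime
        except FileNotFoundError:
            age = float("inf")  # a missing heartbeat file counts as stale
        if needs_restart(read_gpu_temps(), age):
            subprocess.run(["docker", "restart", container], check=False)
        time.sleep(interval_s)
```

It would be started with something like `run_watchdog("provider", "/var/run/provider.heartbeat")`, where both arguments are placeholders. Keeping the decision logic in `needs_restart` separate from the I/O makes the thresholds testable without a GPU.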

The culture here is not one of "passive" investors; it is a community of tech enthusiasts who enjoy the friction of the system. If you are not comfortable reading through a kernel log or debugging a network interface after a firmware update, you are likely to be chewed up and spit out by these protocols within the first month.

Is It Worth the Effort?

If you approach this as a "get-rich-quick" scheme, you will almost certainly fail. The hardware costs, the electricity overhead, and the extreme volatility of crypto-asset markets make this a high-risk venture.

However, if you are a hardware enthusiast who already owns powerful GPUs and wants to offset the cost of your rig while contributing to an open-source AI infrastructure, it is a fascinating experiment. You are essentially acting as a "micro-cloud provider." You are at the edge of the internet, testing the limits of what a decentralized, peer-to-peer infrastructure can handle.

The industry is currently in a "hype phase," but the underlying technology is maturing. The transition from "experimental" to "enterprise-grade" is happening slowly, through painful iterations of bug fixes, security patches, and protocol upgrades.