The industry call for "AI-driven efficiency" has finally moved past the era of glib chatbots and erratic prompt engineering. By mid-2026, the quiet, frantic struggle of managing sprawling networks of niche content sites (the 50- to 100-site portfolios that define the mid-tier of digital publishing) has undergone a structural shift. We are no longer talking about "AI writers." We are talking about agentic workflows: autonomous, multi-step systems that treat a WordPress backend not as a CMS, but as an API-driven ecosystem for content distribution.

In the hallways of niche publishing operations, the mood has shifted from technological euphoria to a calculated, often cynical, operational grind. The "agentic" turn—where LLMs are granted access to tools, research agents, and CMS authentication—is being marketed as the savior of the niche site model. But as always, the reality is a messy, high-maintenance layer of technical debt.

The Architecture of the "Always-On" Portfolio

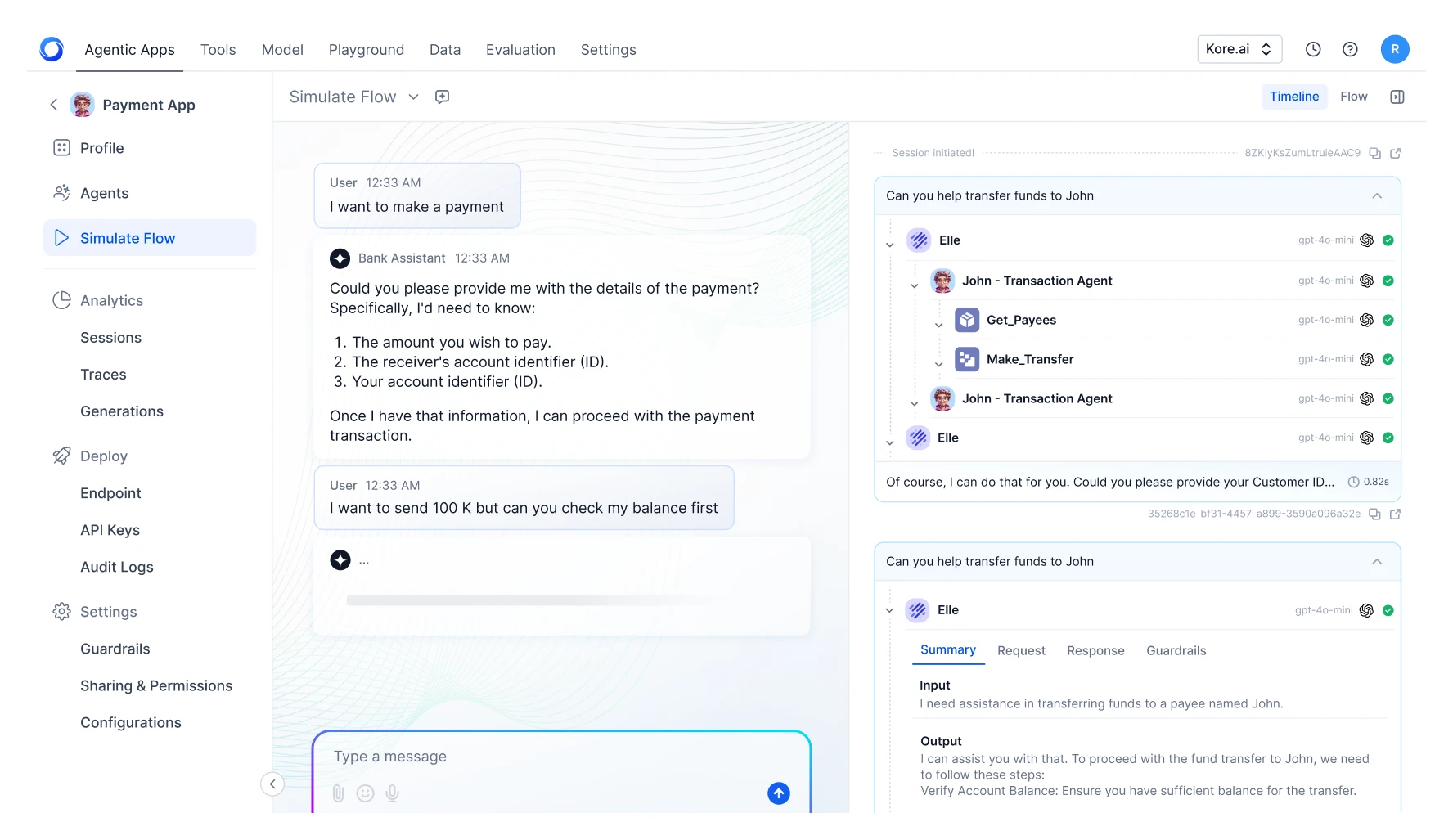

Managing 50 niche sites manually was always a fool’s errand. In 2024, it meant hiring cheap freelancers and praying for content quality. By 2026, the architecture has flipped. A typical stack now looks like this: a master agent monitors Search Console data and keyword volatility, triggers a research agent to scrape Reddit or specialized forums (like the persistent, data-rich threads of r/nichemarketing), hands the findings to a writing agent that drafts a core piece, passes the draft through an SEO-optimization agent, and finally pushes it to the CMS via WP-CLI or custom REST API hooks.
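To make the shape of that stack concrete, here is a minimal sketch of the hand-off chain in Python. Everything in it is an assumption for illustration: the `Draft` record, the stage names, and the stubbed bodies are hypothetical, and the `publish` stage only flips a flag where a real system would call WP-CLI or a REST endpoint.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence


@dataclass
class Draft:
    """Hypothetical record passed from one agent stage to the next."""
    site: str
    keyword: str
    notes: List[str] = field(default_factory=list)
    body: str = ""
    published: bool = False


def research(draft: Draft) -> Draft:
    # Stub: a real research agent would collect forum threads here.
    draft.notes.append(f"forum notes on {draft.keyword}")
    return draft


def write(draft: Draft) -> Draft:
    # Stub: a real writing agent would call a model with the notes.
    draft.body = f"Article about {draft.keyword} ({len(draft.notes)} source)"
    return draft


def optimize(draft: Draft) -> Draft:
    # Stub: prepend a title line containing the target keyword.
    draft.body = f"{draft.keyword.title()}\n\n{draft.body}"
    return draft


def publish(draft: Draft) -> Draft:
    # Stub: a real system would push to the CMS at this point.
    draft.published = True
    return draft


PIPELINE: Sequence[Callable[[Draft], Draft]] = (research, write, optimize, publish)


def run_pipeline(site: str, keyword: str, stages=PIPELINE) -> Draft:
    """Run every stage in order; any exception stops the chain."""
    draft = Draft(site=site, keyword=keyword)
    for stage in stages:
        draft = stage(draft)
    return draft
```

Keeping each stage a plain function from `Draft` to `Draft` is one possible design choice: it lets an operator swap a stage or insert a review step without touching the rest of the chain.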

The promise is total automation. The reality is what one operator on a private Discord server recently described as "babysitting an army of unhinged interns."

"The agents are great until they’re not. I had an agentic workflow decide that because a certain keyword was trending in the 'home repair' niche, it needed to cross-post HVAC repair advice to my vintage stamp collecting blog. You don't get a 'delete' button for that kind of hallucination at scale." — Feedback from a portfolio owner on the 'IndieHackers-adjacent' Slack channels, June 2026.
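A cross-posting accident of this kind is what a per-site topic allow-list is meant to catch. The sketch below is illustrative only: the site names, the `SITE_TOPICS` mapping, and the assumption that each article arrives already tagged with topics are all hypothetical.

```python
# Hypothetical allow-list: each site declares the topics it may publish.
SITE_TOPICS = {
    "stamps.example": {"stamps", "philately", "postal history"},
    "homefix.example": {"hvac", "plumbing", "home repair"},
}


def allowed_targets(article_topics, site_topics=SITE_TOPICS):
    """Return the sites whose allow-list overlaps the article's topics."""
    wanted = {topic.lower() for topic in article_topics}
    return sorted(
        site for site, topics in site_topics.items() if wanted & topics
    )
```

With this check in front of the publish step, an HVAC article resolves to the home repair site only, and an article with no matching topic resolves to no site at all, which is the safer default.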

The Hidden Cost of "Zero-Touch" Scaling

The biggest myth of 2026 is that these workflows are "zero-touch." If anything, the management overhead has simply shifted from content creation to system maintenance and guardrail management.

Engineers are now spending more time debugging Python scripts that define the logic of an agent’s "thought process" than they ever did writing articles. When an API update breaks the link between your research scraper and your writing agent, your content pipeline doesn’t just slow down—it flatlines.
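One common mitigation is to validate the hand-off between stages so that a changed upstream response fails loudly instead of feeding garbage to the writer. The following is a sketch under assumptions: the field names in `REQUIRED_FIELDS` and the `ContractError` type are invented for illustration and do not describe any particular scraper's output.

```python
# Hypothetical contract for what the research stage hands to the writer.
REQUIRED_FIELDS = {"keyword": str, "sources": list, "summary": str}


class ContractError(ValueError):
    """Raised when a stage hand-off does not match the expected shape."""


def validate_research_payload(payload: dict) -> dict:
    """Return the payload unchanged, or raise listing every problem found."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected):
            actual = type(payload[name]).__name__
            problems.append(f"{name}: expected {expected.__name__}, got {actual}")
    if problems:
        raise ContractError("; ".join(problems))
    return payload
```

The pipeline still stops when the upstream API changes, but it stops with a message naming the broken field rather than with a silent run of empty articles.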

Then there is the problem of "platform drift." Google’s search algorithms in 2026 are increasingly aggressive at detecting the semantic patterns of automated, low-effort agentic clusters. Operators are now forced to build "humanizing layers"—randomized pacing, injecting anecdotal evidence scraped from obscure forums, and manual review checkpoints that effectively recreate the editorial process they were trying to outsource.

Why the "Hype" Feels Different This Time

Unlike the generative AI hype of 2023, the agentic shift is rooted in genuine, measurable utility. We aren't just generating text; we are generating outcomes.

- Inventory Synchronization: Agents are now capable of updating affiliate links across 50 sites simultaneously when a vendor changes their commission structure or product pricing, a task that once took a team of three a full work week.

- Localized SEO: We are seeing the rise of "micro-agents" that specialize in tailoring content for specific regional search queries, something that was economically unviable until the cost of inference dropped to current levels.
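The affiliate-link case lends itself to a small sketch. The code below assumes post bodies are available as HTML strings and that the operator supplies an explicit old-to-new URL map; both function names are hypothetical, and the regular expression only handles double-quoted `href` attributes, so a production version would want a real HTML parser.

```python
import re

HREF_PATTERN = re.compile(r'(href=")([^"]*)(")')


def rewrite_links(html: str, link_map: dict):
    """Swap href values found in link_map; return (new_html, change_count)."""
    count = 0

    def swap(match):
        nonlocal count
        url = match.group(2)
        if url in link_map:
            count += 1
            return f"{match.group(1)}{link_map[url]}{match.group(3)}"
        return match.group(0)  # leave unrelated links untouched

    new_html = HREF_PATTERN.sub(swap, html)
    return new_html, count


def plan_update(sites: dict, link_map: dict) -> dict:
    """Dry run: count links that would change per site, writing nothing."""
    return {
        site: sum(rewrite_links(body, link_map)[1] for body in posts)
        for site, posts in sites.items()
    }
```

Running `plan_update` before any write gives the operator a per-site count to sanity-check, which matters when one map is about to touch 50 sites at once.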

However, the power dynamic is shifting. The platforms (Google, WordPress, affiliate networks) are increasingly aware of the agentic surge. We are seeing a cat-and-mouse game where platform APIs are being restricted or monetized more aggressively, turning "free" automation into a high-cost overhead model.

The Institutional Backlash

There is a growing "broken-windows theory" in the SEO community. As agentic workflows proliferate, the signal-to-noise ratio on the open web has reached a breaking point. Search engines are responding with increasingly opaque ranking signals. Many operators report that their portfolios, once stable, are now seeing "yo-yo" traffic patterns: huge spikes in traffic followed by total blackouts as their agentic signatures are identified and throttled by automated spam filters.

The fragility is the feature. Because these systems are interconnected, a single bad prompt in a "master agent" can propagate a mistake across 50 domains in seconds. We are no longer seeing site-level failures; we are seeing system-level collapses.
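One way to limit that blast radius is a staged rollout: apply a change to a few canary sites, check them, and only then touch the rest. The sketch below is a minimal illustration, not a description of any operator's actual tooling; `apply_change` and `check_ok` are hypothetical callbacks the operator would supply.

```python
def staged_rollout(sites, apply_change, check_ok, canary_size=2):
    """Apply a change to a canary batch first; halt on the first failed check."""
    canary, rest = sites[:canary_size], sites[canary_size:]
    applied = []
    for site in canary:
        apply_change(site)
        applied.append(site)
        if not check_ok(site):
            # Stop before the change reaches any remaining site.
            skipped = canary[len(applied):] + rest
            return {"applied": applied, "halted": True, "skipped": skipped}
    for site in rest:
        apply_change(site)
        applied.append(site)
    return {"applied": applied, "halted": False, "skipped": []}
```

With 50 domains and a bad change that fails on the first canary, this touches one site instead of all 50. It does not undo the change on that one site, so a rollback step would still be needed.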